C++ Resources, Lifetimes, and Ownership

January 30, 2020

Talk for the Inaugural DC C++ User Group Meeting

C++ for Fun and Profit

August 29, 2019

A presentation to West Point math and computer science cadets about C++

C Constructs That Don't Work in C++

April 28, 2019

A Survey of the C Constructs That Don't Work in C++

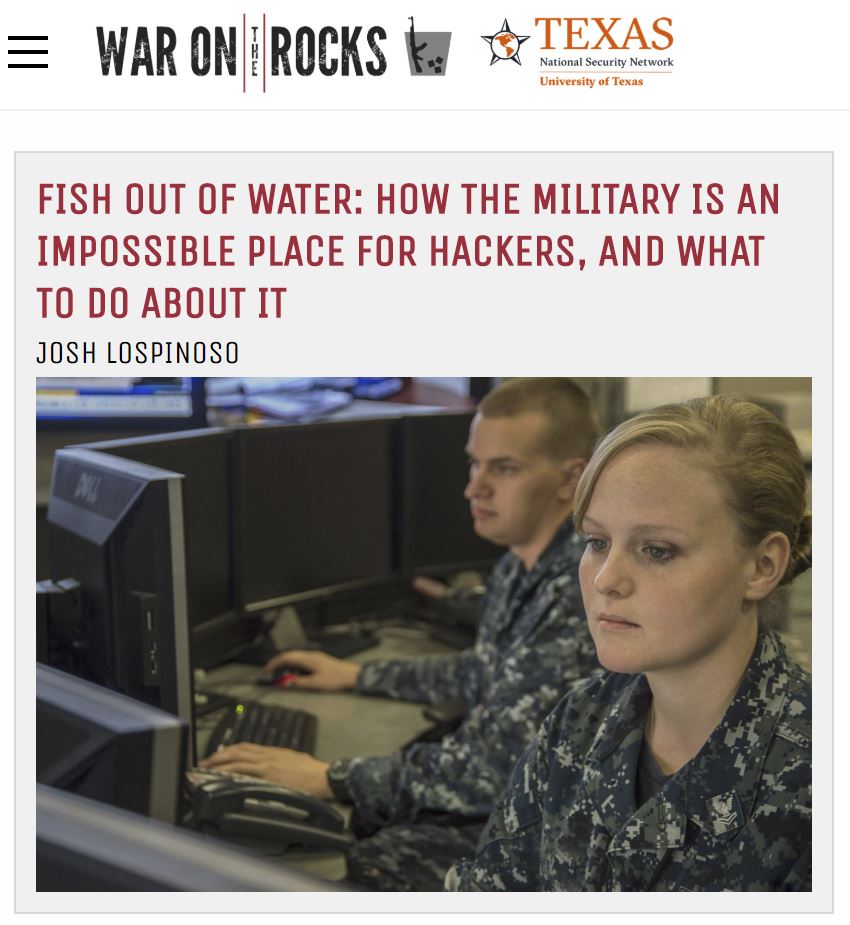

Fish Out of Water (External Article)

July 12, 2018

How the Military Is an Impossible Place for Hackers and What to Do About It

Video Presentation of NGPVAN Hack, Unfurl, and Abrade

February 25, 2018

Vulnerability Patched in Democratic Donor Database, BSidesNOVA 2018 Presentation

unfurl, An Entropy-Based Link Vulnerability Analysis Tool

February 8, 2018

A Screening Tool for Analyzing Entropy of Link Generation Algorithms

Vulnerability Patched in Democratic Donor Database

October 16, 2017

Weak URL Generation for Email Subscription Management Exposed Democratic Donors' Emails to Attack

Abrade, a high-throughput web API scraper

September 15, 2017

A TLS- and SOCKS-5-friendly web-resource scraper built on asynchronous network I/O.

gargoyle, a memory scanning evasion technique

March 4, 2017

A technique using tail calls, a ROP gadget, and a stack trampoline to evade memory scans

snuck.me, an open-source service detecting SSL man-in-the-middle

February 20, 2017

snuck.me allows users to compare legitimate SSL certs with whatever their browser is getting.

Rivestment: A Game of Hash Collisions

December 18, 2016

Rivestment is a Slack-chat integrated, multiplayer game for aspiring programmers.

Defeating PoisonTaps and other Rogue Network Adapters with Beamgun

November 30, 2016

New and improved Beamgun now defends against USB network adapters and mass storage!

Defeating the USB Rubber Ducky with Beamgun

November 15, 2016

Introducing Beamgun, a lightweight USB Rubber Ducky defeat for Windows

Setting Up a Matterbot with Slack via ngrok

October 14, 2016

Running a Matterbot on Slack with ngrok

Underhanded C Contest Submission (2015)

February 28, 2016

Using a typo to dork a fissile material test

Sending Subliminal Messages via Twitter Retweets

February 6, 2016

Use cryptographic hashing to send subliminal messages via retweets.

dailyc - A Batch Multimedia Message Service

September 14, 2015

Send multimedia messages using dailyc

Common x86 Calling Conventions

April 4, 2015

Understanding cdecl, stdcall, and fastcall is critical to understanding x86 assembly

Lambda expressions and C++11

March 11, 2015

Using lambdas can make for some wonderfully elegant code

Getting Started with Reverse Engineering

March 6, 2015

Basic static analysis with strings, dumpbin, and IDA

Getting started with Vim

February 28, 2015

Vim is an excellent tool to master, but it has quite a learning curve

Configuring Wireshark on Ubuntu 14

February 11, 2015

Wireshark can be configured to work without root privileges

Visual Studio 2013 and gmock v1.7.0

January 22, 2015

gmock is an excellent unit testing framework, but it takes some effort to get set up

Tools for fixing symbols issues in WinDbg

January 12, 2015

Symbols can give some trouble when WinDbg is first installed

Getting a Local Kernel Debugger Running

January 11, 2015

A brief walkthrough for WinDbg in local kernel mode